Energy Supply Constraints Are a Real Headwind

Why energy capacity is a real structural issue, not a cyclical nuisance, and where the opportunity lives

Energy is no longer a cyclical nuisance. It is becoming a structural constraint on growth. This is not just an AI-driven story: demand for energy has always risen with population and economic growth, and each new wave of technology has typically needed electricity to power its use. The advent of AI magnifies that demand considerably.

Demand Outlook

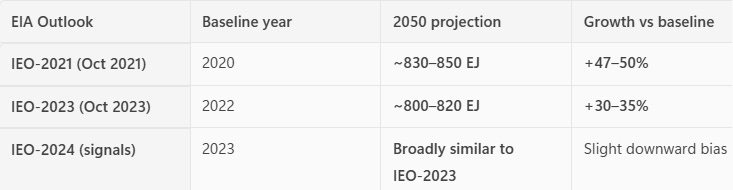

In 2021, the US Department of Energy projected that world energy consumption would grow by roughly 50% from 2020 levels by 2050. To be fair, 2020 saw a record drop in consumption due to the pandemic, but the projection still implied global energy consumption climbing from roughly 560 exajoules (EJ) to ~830-850 EJ. The exact magnitudes matter less than the baseline they provide for the rates of change discussed later.

According to the EIA’s International Energy Outlook 2021:

“Energy consumption continues to rise through 2050 in both OECD and non-OECD countries, largely as a result of increasing GDP and population. As standards of living increase, most notably in non-OECD Asian countries, demand for goods and the energy needed to manufacture those goods increase.”

In 2023 and 2024, the EIA revised the outlook.

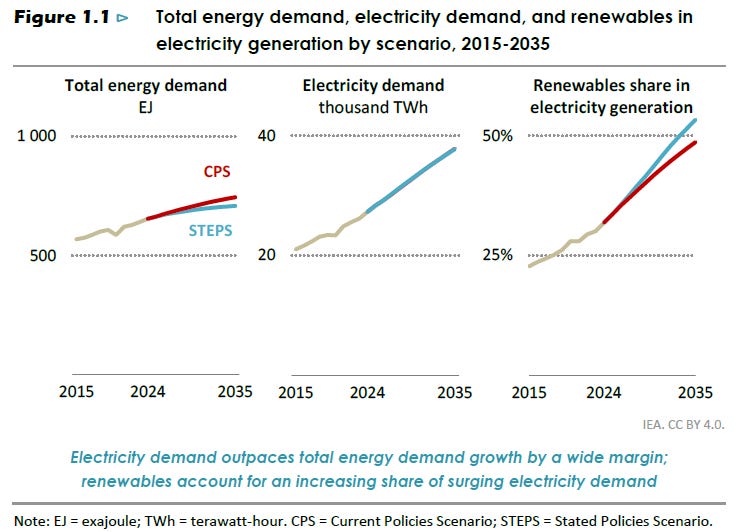

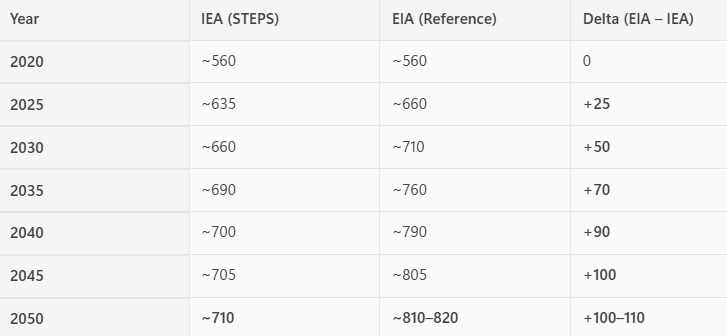

It is important to note that numerous institutions publish projections, and they differ widely. Their forecasts vary with policy assumptions, fuel mix changes, efficiency gains, and overall methodology. I include the IEA here to make clear that demand is projected to increase significantly regardless of the forecasting model.

According to the International Energy Agency, global energy demand was ~650 EJ as of 2024 and is projected to reach 690 EJ by 2035 and 710 EJ by 2050.

Here is a comparison table:

Either way, global demand for energy is expected to rise by roughly 30-50% from 2020 levels by 2050, and by roughly 10-25% from today's levels.
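For readers who want to check the arithmetic, here is a minimal sketch that recomputes the growth ranges from the EJ figures quoted in the text. It assumes the EIA and IEA numbers can be compared directly, which is a simplification since the two agencies use different baselines and methodologies; the variable names are mine.

```python
# Figures quoted in the text, in exajoules (EJ).
EIA_2020_BASELINE = 560.0        # approximate 2020 consumption
EIA_2050_RANGE = (830.0, 850.0)  # EIA projected range for 2050
IEA_2024 = 650.0                 # IEA estimate for 2024
IEA_2050 = 710.0                 # IEA projection for 2050


def pct_change(start: float, end: float) -> float:
    """Percentage change from start to end."""
    return (end - start) / start * 100.0


# Lowest and highest 2050 endpoints across the two agencies.
# Assumption: treating both agencies' figures as directly comparable.
low_2050 = min(IEA_2050, *EIA_2050_RANGE)
high_2050 = max(IEA_2050, *EIA_2050_RANGE)

growth_from_2020 = (pct_change(EIA_2020_BASELINE, low_2050),
                    pct_change(EIA_2020_BASELINE, high_2050))
growth_from_2024 = (pct_change(IEA_2024, low_2050),
                    pct_change(IEA_2024, high_2050))

print(f"Growth vs. 2020: {growth_from_2020[0]:.0f}% to {growth_from_2020[1]:.0f}%")
print(f"Growth vs. 2024: {growth_from_2024[0]:.0f}% to {growth_from_2024[1]:.0f}%")
```

On these inputs the script prints ranges of about 27% to 52% versus 2020 and about 9% to 31% versus 2024, in the same neighborhood as the rounded ranges above though not identical to them.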

What is more important is that current estimates are most likely underestimating the increased demand from AI and AI-adjacent technologies. One key ingredient for AI to work, apart from LLMs and intelligent code, is energy. AI is far more energy-intensive than traditional computing, with a single query using 10–50 times more electricity than a standard web search.

It is not just the computation. Data centers require massive cooling systems, networking, and backup power, further increasing demand for energy.

There are a few reasons why this is being miscalculated:

First, most forecasts implicitly assume a linear scaling of compute demand, while recent trends suggest something closer to an exponential path. Training runs for frontier models have historically grown by orders of magnitude over relatively short timeframes, and there is little evidence this trend is slowing. If model capability continues to scale with compute, demand for energy may accelerate faster than currently embedded in baseline scenarios.

Second, estimates often rely on efficiency gains offsetting demand growth. While improvements in chip design (e.g., GPUs, ASICs) and data center optimization are real, history suggests that efficiency gains tend to be reinvested into higher performance rather than reducing total energy consumption, a dynamic similar to Jevons' paradox.[1] In practice, lower cost per unit of compute may expand usage rather than constrain it.

Third, projections may understate the breadth of AI adoption. Current assumptions tend to focus on hyperscalers and large centralized data centers. However, as AI becomes embedded across enterprise workflows, consumer applications, and edge devices, aggregate compute demand could expand well beyond current centralized infrastructure forecasts.

Finally, there is a timing mismatch embedded in many projections. Announced data center capacity and power purchase agreements already point to a sharp increase in near-term electricity demand, suggesting that realized consumption could outpace modeled trajectories over the next several years.

Taken together, these factors suggest that even relatively bullish forecasts may represent a floor rather than a ceiling for AI-driven electricity demand.
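Two of the arguments above, linear versus exponential scaling and the Jevons-style rebound, can be made concrete with a toy calculation. This is only a sketch under stated assumptions: the growth rates, the efficiency gain, and the elasticities are hypothetical placeholders rather than estimates, and the rebound model assumes that the cost of compute falls in proportion to its energy use and that demand has a constant price elasticity.

```python
def linear_path(base, annual_step, years):
    """Demand that grows by a fixed amount each year."""
    return [base + annual_step * t for t in range(years + 1)]


def exponential_path(base, annual_rate, years):
    """Demand that grows by a fixed percentage each year."""
    return [base * (1.0 + annual_rate) ** t for t in range(years + 1)]


def energy_multiplier(efficiency_gain, demand_elasticity):
    """Change in total energy use after an efficiency gain.

    Assumptions (illustrative only): cost per unit of compute falls by the
    same factor as energy per unit, and usage responds with a constant
    price elasticity. A result above 1.0 means total energy use rises.
    """
    usage_multiplier = efficiency_gain ** demand_elasticity
    return usage_multiplier / efficiency_gain


# Hypothetical inputs: index of 100 today, 20 per year vs. 20% per year.
linear = linear_path(100.0, 20.0, 10)
exponential = exponential_path(100.0, 0.20, 10)
print(f"Year 10, linear: {linear[-1]:.0f}, exponential: {exponential[-1]:.0f}")

# Hypothetical 2x efficiency gain under three demand elasticities.
for elasticity in (0.5, 1.0, 1.5):
    print(elasticity, round(energy_multiplier(2.0, elasticity), 2))
```

Both paths start with the same first-year increase, yet after ten years the exponential path is roughly twice the linear one. In the rebound model, a doubling of efficiency lowers total energy use only when elasticity is below 1; above 1, total consumption rises despite the efficiency gain.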

Supply-Side Constraints

It does not take much data to support a simple point: demand for AI scales far faster than the supply chain required to support it.

This is not about the speed of inference. AI systems can generate responses almost instantaneously, despite the immense compute behind them. The constraint lies elsewhere: in the physical infrastructure required to deliver that compute.

Expanding AI capacity requires a long and complex chain of inputs: semiconductors, data centers, power generation, cooling systems, and grid infrastructure, each with its own bottlenecks. Fabrication plants take years to build and bring online. Data centers require land, permitting, and reliable access to electricity. Power supply, in turn, depends on generation capacity, transmission buildout, and in some cases fuel logistics, whether natural gas, uranium, or oil (see Strait of Hormuz crisis).

This stands in stark contrast to the demand side. Deploying AI is fast, distributed, and capital-light at the margin. A new application can be launched in days, and adoption can scale globally almost immediately. Multiply that dynamic across thousands of firms and millions of users, and demand for compute, and therefore electricity, can accelerate far more quickly than the underlying infrastructure can adjust.

The result is an inherent asymmetry: AI demand compounds on a software timeline, while supply responds on an industrial one.

That mismatch is the core of the constraint.
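The asymmetry can be illustrated with a deliberately simple model. The sketch below assumes demand compounds at a fixed annual rate while the capacity online in any given year was sized to the demand observed when it was ordered, one lead time earlier. The function name, the 25% growth rate, and the four-year lead time are all hypothetical choices for illustration, not estimates.

```python
def lagged_supply_gap(initial_load, demand_growth, lead_time_years, horizon_years):
    """Return (year, demand, supply, demand/supply) rows for a toy model.

    Assumption: capacity available in a given year equals the demand that
    was observed lead_time_years earlier, when that capacity was ordered.
    """
    rows = []
    for year in range(horizon_years + 1):
        demand = initial_load * (1.0 + demand_growth) ** year
        # Capacity online today was sized to demand at the time of ordering.
        order_year = max(year - lead_time_years, 0)
        supply = initial_load * (1.0 + demand_growth) ** order_year
        rows.append((year, demand, supply, demand / supply))
    return rows


# Hypothetical inputs: 25% annual demand growth, 4-year build lead time.
rows = lagged_supply_gap(100.0, 0.25, 4, 8)
for year, demand, supply, ratio in rows:
    print(f"year {year}: demand {demand:6.1f}, supply {supply:6.1f}, ratio {ratio:.2f}")
```

In this model the shortfall settles at a constant ratio of (1 + growth) raised to the lead time, so either faster demand growth or longer build times widens the gap. Real systems are messier, since builders forecast ahead and demand responds to price, but the direction of the effect is the point.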

What This Means

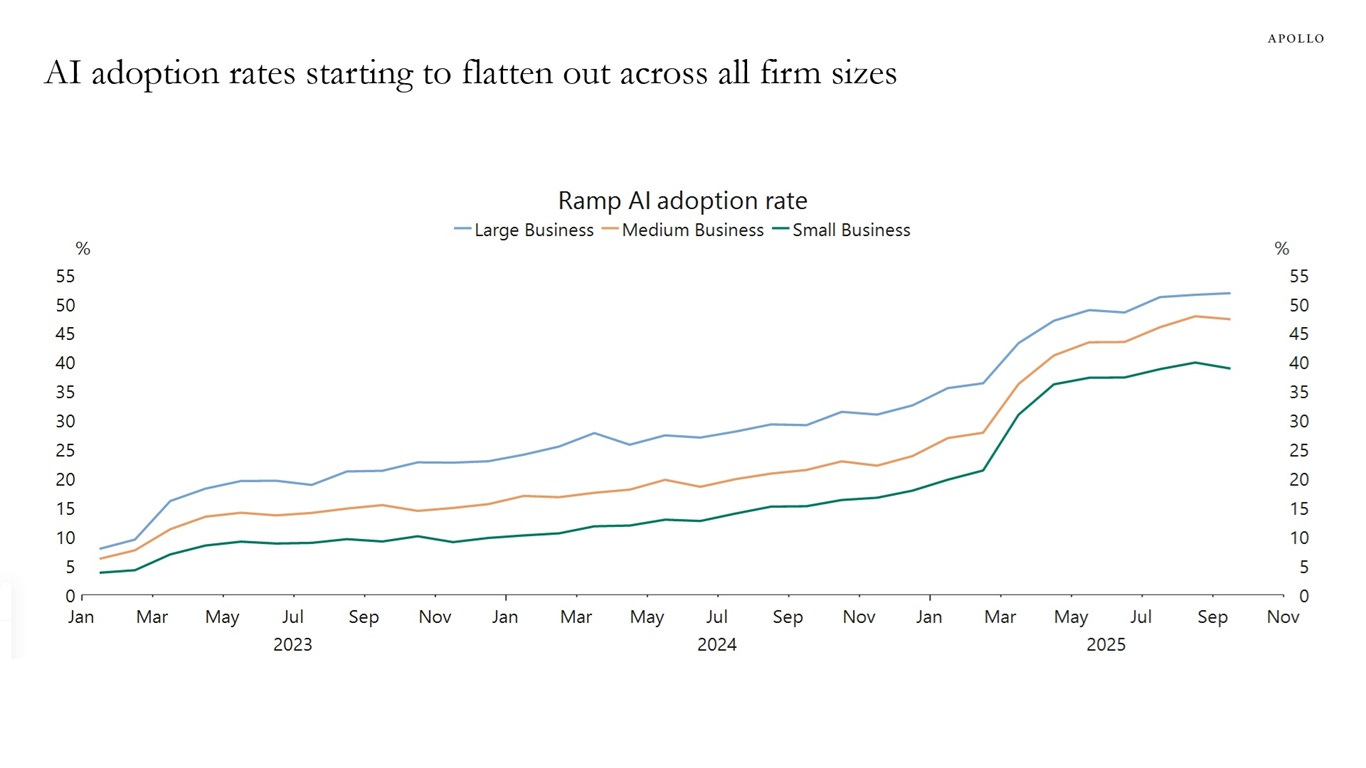

Of course, this relies on a rate of adoption of a new technology that, arguably, is not yet showing up in the data. That is a fair counterargument, and Torsten Slok at Apollo has shown data suggesting as much.

The Nobel Prize–winning economist Robert Solow said in 1987, “You can see the computer age everywhere but in the productivity statistics.” This observation is the so‑called Solow productivity paradox.

The same can be said today: AI is everywhere except in the incoming macroeconomic data.

Today, you don’t see AI in the employment data, productivity data or inflation data. Similarly, for the S&P 493, there are no signs of AI in profit margins or earnings expectations.

Maybe there is a J‑curve effect for AI, where it takes time for AI to show up in the macro data. Maybe not.[2]

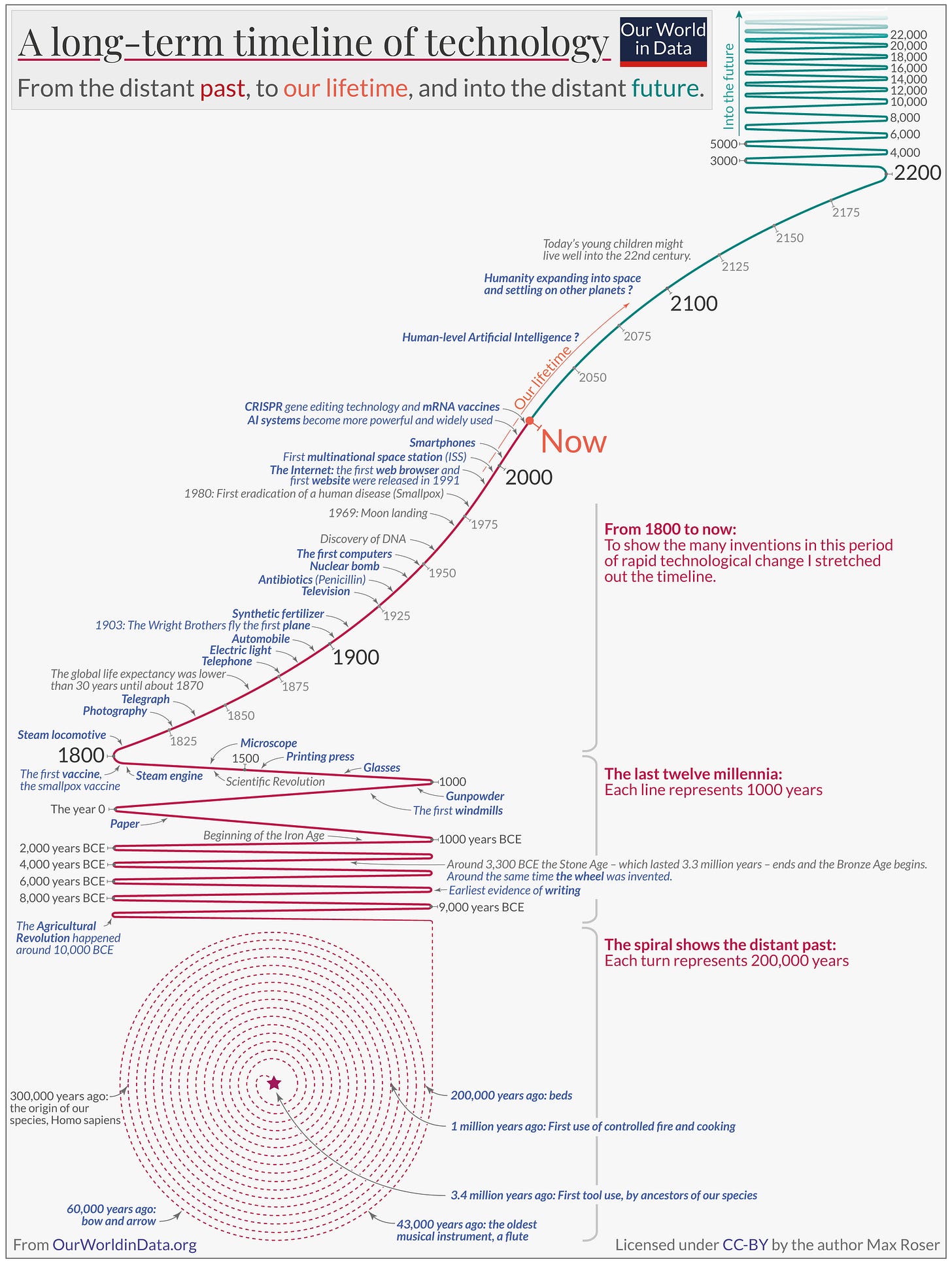

History suggests that technological change is not linear, but accelerative, with each wave of innovation increasing the pace of the next.

“The pace of technological change is much faster now than it has been in the past, according to Our World in Data. It took 2.4 million years for our ancestors to control fire and use it for cooking, but 66 years to go from the first flight to humans landing on the moon.”[3]

If AI adoption proves slower than expected, then the outlook remains familiar: energy demand rises alongside population and economic growth, and the challenge is simply scaling supply to meet a richer, more energy-intensive world.

But if AI continues on its current trajectory, scaling rapidly and diffusing across the economy, then the constraint shifts. In that world, the limiting factor is no longer innovation or capital, but the speed at which physical energy and infrastructure systems can expand.

Where the Opportunity Lives

If energy is transitioning from a cyclical input to a structural constraint, then the opportunity does not sit in the most obvious places. It sits in the parts of the system that are scarce, slow to scale, and increasingly indispensable.

First, it lives in power generation and firm capacity. As demand becomes more persistent and less elastic, the value of reliable, dispatchable energy increases. Not all megawatts are equal: intermittent supply does not solve for the continuous, high-load demand of data centers and industrial users. Assets that can deliver consistent power, particularly in constrained regions, may see both higher utilization and improved pricing dynamics.

Second, it lives in grid infrastructure. In many markets, the bottleneck is no longer generation but transmission. The ability to move electricity from where it is produced to where it is needed has become a binding constraint. Investment in transmission, distribution, and grid modernization is likely to be a necessary condition for any meaningful supply response.

Third, it lives in energy-adjacent infrastructure. Cooling systems, backup power, and data center buildout are not peripheral; they are integral to scaling compute. As AI deployment accelerates, these components move from supporting roles to critical infrastructure.

More broadly, the opportunity lies in recognizing that energy is no longer just a sector; it is becoming a cross-asset driver. Industries and companies with secured access to power may gain a structural advantage, while those exposed to rising and volatile energy costs may face increasing pressure.

The common thread is scarcity. In a system where demand can scale quickly but supply cannot, value accrues to the parts of the chain that are hardest to replicate and slowest to expand.

In this environment, the question is no longer who can build the best AI, but who can power it.

Published by Michele Chiacchio, CFA

Notes on the Market is a letter on investing, financial markets, and global macroeconomic and business conditions. It reflects ongoing research and investment thinking centered on long-term value creation and disciplined capital allocation.


This letter is provided for informational and educational purposes only and does not constitute financial, investment, or economic advice. Investing involves risk, including the possible loss of principal. The views expressed are solely those of the author and do not necessarily reflect those of any affiliated organizations or employers.

[1] Jevons Paradox is an economic phenomenon first observed by the British economist William Stanley Jevons in 1865. He noted that as steam engines became more efficient, Britain’s coal consumption actually increased instead of decreasing, contrary to the expectation that efficiency would reduce usage. The paradox arises because greater efficiency lowers the effective cost of using a resource, which can stimulate higher demand and offset the savings from efficiency improvements.

[2] https://www.apolloacademy.com/waiting-for-the-ai-j-curve/

[3] https://www.weforum.org/stories/2023/02/this-timeline-charts-the-fast-pace-of-tech-transformation-across-centuries/

Korea’s Yongin semiconductor cluster is a live case study of this constraint. Samsung and SK Hynix are building a single complex that needs multi-GW firm power in one of the most grid-congested corridors in the country. Generation capacity exists nationally, but the transmission infrastructure to deliver it to the site does not, and the buildout timeline is measured in years, not quarters. The bottleneck is exactly where you identify it: not generation but transmission.

What makes the Korean case sharper is that the grid operator is a state monopoly with no market mechanism to price congestion or incentivize demand-side flexibility. In ERCOT or PJM, locational pricing at least signals where the constraint binds. In Korea’s single-price pool, a semiconductor fab in a congested corridor pays the same tariff as one with surplus grid capacity. The structural constraint you describe is real, but how it manifests depends entirely on market design.